Knowledge Byte: Benefits And Challenges of Virtualization

Cloud Credential Council (CCC)

This article is not meant to be an exhaustive list of the benefits and challenges of virtualization, only a general overview of what they are and why they matter.

The Benefits of Virtualization

Cost Reduction: Partitioning and isolation, the defining characteristics of server virtualization, enable simple and safe server consolidation. Consolidation can greatly reduce the number of physical servers, which in turn reduces hardware, maintenance, floor space, power, and air conditioning costs. Note, however, that although there are far fewer physical servers, the number of servers to be managed does not shrink: each consolidated workload still runs as a virtual server. Installing operations management tools is therefore recommended when virtualizing servers. Virtualization also lowers the Total Cost of Ownership (TCO) by using server resources more efficiently, by simplifying operations, and through virtualization-specific features. With today’s improved server CPU performance, a few servers run at high utilization but most are underutilized. Virtualization can eliminate this waste of CPU resources and optimize resource use across the server environment. Furthermore, because servers previously managed by each business division’s staff can be centrally managed by a single administrator, operations management costs can be greatly reduced.
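As a rough illustration of consolidation, the sketch below packs underutilized workloads onto as few hosts as possible using a first-fit-decreasing heuristic. It is not how any particular product places VMs; the function name, the single CPU dimension, and the 20% headroom are all assumptions made for the example.

```python
def consolidate(loads, host_capacity, headroom_pct=20):
    """Pack workloads onto hosts with first-fit decreasing.

    loads         -- CPU demand of each workload (hypothetical units)
    host_capacity -- CPU capacity of one physical host, same units
    headroom_pct  -- share of each host kept free (assumed value)
    Returns a list of hosts, each a list of the loads placed on it.
    """
    usable = host_capacity * (100 - headroom_pct) / 100
    hosts = []
    # Place the largest workloads first; they are the hardest to fit.
    for load in sorted(loads, reverse=True):
        if load > usable:
            raise ValueError(f"workload {load} does not fit on any host")
        for host in hosts:
            if sum(host) + load <= usable:
                host.append(load)
                break
        else:
            # No existing host has room, so bring another host online.
            hosts.append([load])
    return hosts


if __name__ == "__main__":
    # Eight lightly loaded physical servers, expressed as % of one host's CPU.
    placement = consolidate([10, 15, 5, 20, 10, 30, 5, 10], host_capacity=100)
    print(len(placement), "hosts:", placement)
```

A real sizing exercise would also account for memory, storage, network, and peak rather than average demand.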

Improves Provisioning Speed: What once took hours can now be done in minutes. Not only can new servers be brought online quickly, but they can be broken down, rearranged, reassigned, and redeployed to suit the needs of the moment.

Improves Availability and Business Continuity: One beneficial feature of virtualized servers that is not available in physical server environments is live migration. With live migration, running virtual servers can be moved to another physical server without being shut down, for example so that maintenance can be performed on the original host. End users therefore see no impact. Another great advantage of virtualization technology is that its encapsulation and hardware-independence features enhance availability and business continuity.

Increases Efficiency for Development and Test Environments: At system development sites, servers are often used inefficiently. When each business division’s development team uses its own physical servers, the number of servers can easily grow. Conversely, when teams share physical servers, reconfiguring development and test environments can be time-consuming. Server virtualization resolves both issues by running various operating system environments simultaneously on one physical server, which allows multiple environments to be developed and tested concurrently. In addition, because development and test environments can be encapsulated and saved, reconfiguration is extremely simple.

Greater Flexibility: Virtualization allows you to run multiple types of applications and operating systems on the same hardware. You can configure a virtualized environment to support different operating systems for workstations, and run nearly any application on any machine.

The Challenges of Virtualization

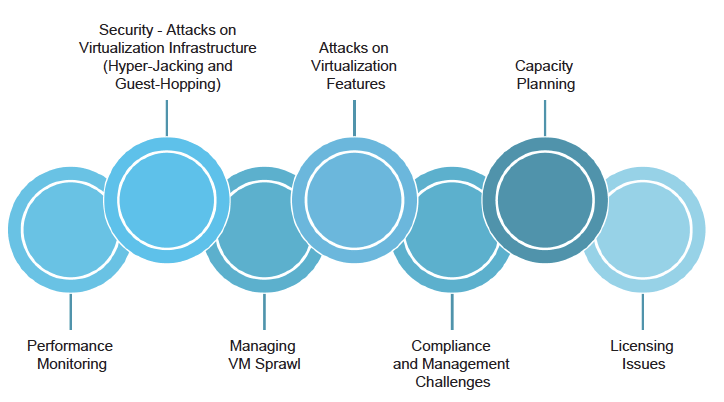

Performance Monitoring: Monitoring the performance of virtual servers requires a different approach from monitoring physical servers. Conventional CPU and memory utilization monitoring alone is not enough. In a virtual infrastructure, the VMs share common hardware resources such as CPU, memory, and storage. If a host runs hundreds of VMs, each VM receives only a share of these resources, so the load of one VM affects the others. To ensure the performance of a virtual infrastructure, a different set of performance metrics has to be monitored. Metrics such as CPU ready, memory ready, memory balloon, and swapped memory have to be monitored across all the VMs in real time. In addition, live migration of VMs increases the monitoring complexity.
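The idea of watching contention metrics rather than plain utilization can be sketched as follows. This is a minimal illustration, not a monitoring product's API: the `VMSample` fields, the function names, and the 5% CPU ready threshold are assumptions, and a real deployment would read these values from its hypervisor's monitoring interface.

```python
from dataclasses import dataclass


@dataclass
class VMSample:
    """One monitoring sample for a VM (hypothetical field names)."""
    name: str
    cpu_ready_pct: float  # share of time the VM waited for a physical CPU
    ballooned_mb: float   # guest memory reclaimed by the balloon driver
    swapped_mb: float     # guest memory swapped out by the hypervisor


# Assumed threshold for the example; tune it for the environment.
CPU_READY_LIMIT_PCT = 5.0


def contention_flags(sample):
    """Return the contention signals present in one sample."""
    flags = []
    if sample.cpu_ready_pct > CPU_READY_LIMIT_PCT:
        flags.append("cpu_ready")
    if sample.ballooned_mb > 0:
        flags.append("ballooning")
    if sample.swapped_mb > 0:
        flags.append("swapping")
    return flags


def vms_under_contention(samples):
    """Map VM name to its contention signals, skipping healthy VMs."""
    report = {}
    for sample in samples:
        flags = contention_flags(sample)
        if flags:
            report[sample.name] = flags
    return report


if __name__ == "__main__":
    samples = [
        VMSample("web-01", cpu_ready_pct=1.2, ballooned_mb=0, swapped_mb=0),
        VMSample("db-01", cpu_ready_pct=12.5, ballooned_mb=256, swapped_mb=0),
    ]
    print(vms_under_contention(samples))
```

A VM can show modest CPU utilization and still appear in this report, which is why utilization alone is a poor signal on a shared host.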

Security – Attacks on Virtualization Infrastructure (Hyper-Jacking and Guest-Hopping): In virtualized systems, the hypervisor is the single point of failure in security, and if you lose it, you lose the protection of sensitive information. This increases the degree of risk and exposure substantially. For example, hyper-jacking involves installing a rogue hypervisor that can take complete control of a server. Regular security measures are ineffective because the operating system will not even be aware that the machine has been compromised. Proactive (rather than reactive) measures such as hardening the environment are required. The best way to avoid hyper-jacking is to use hardware-rooted trust and secure launching of the hypervisor. VM jumping, or guest-hopping, is a more realistic possibility and poses just as serious a threat. This attack method typically exploits vulnerabilities in hypervisors that allow malware or remote attacks to compromise VM separation protections and gain access to other VMs, hosts, or even the hypervisor itself. These attacks are often carried out once an attacker has gained access to a low-value, and thus less secure, VM on the host, which is then used as a launch point for further attacks on the system. Some examples have used two or more compromised VMs in collusion to enable a successful attack against secured VMs or the hypervisor itself.

Managing VM Sprawl: Because virtualization makes it easy to create VMs and allocate resources such as storage space to them, it has led to both the uncontrolled growth of virtual machines and the overallocation of resources. In a typical virtual infrastructure, not all of the available VMs are used to full capacity, which wastes available resources. Hence, VM sprawl has become the Achilles’ heel of virtualization. To avoid VM sprawl, the virtual infrastructure has to be monitored continuously to identify VMs that have been idle for a certain period, VMs that have overprovisioned or underprovisioned resources, and VMs that have never been powered on since they were provisioned. By removing unused VMs, or by optimizing the resources allocated to VMs, VM sprawl can be greatly reduced. Resource optimization also avoids needless hardware purchases.
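The checks described above can be expressed as a simple classification pass over an inventory. The sketch below assumes a hypothetical `VMRecord` exported from an inventory tool; the 30-day idle window and the 10% and 90% utilization cut-offs are illustrative values, not recommendations.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class VMRecord:
    """Inventory entry for one VM (hypothetical field names)."""
    name: str
    last_powered_on: Optional[date]  # None means never powered on
    last_active: Optional[date]      # last day with meaningful activity
    avg_cpu_util_pct: float          # average use of the allocated vCPUs


# Assumed policy values for the example.
IDLE_AFTER = timedelta(days=30)
OVERPROVISIONED_BELOW_PCT = 10.0
UNDERPROVISIONED_ABOVE_PCT = 90.0


def classify(vm, today):
    """Label a VM with the first sprawl condition it matches."""
    if vm.last_powered_on is None:
        return "never_powered_on"
    if vm.last_active is None or today - vm.last_active > IDLE_AFTER:
        return "idle"
    if vm.avg_cpu_util_pct < OVERPROVISIONED_BELOW_PCT:
        return "overprovisioned"
    if vm.avg_cpu_util_pct > UNDERPROVISIONED_ABOVE_PCT:
        return "underprovisioned"
    return "ok"


def sprawl_report(vms, today):
    """Return {vm name: label} for every VM that needs attention."""
    labels = {vm.name: classify(vm, today) for vm in vms}
    return {name: label for name, label in labels.items() if label != "ok"}


if __name__ == "__main__":
    today = date(2024, 6, 1)
    inventory = [
        VMRecord("old-test", None, None, 0.0),
        VMRecord("batch", date(2024, 3, 1), date(2024, 3, 15), 40.0),
        VMRecord("web", date(2024, 5, 1), date(2024, 5, 31), 4.0),
    ]
    print(sprawl_report(inventory, today))
```

The labels are only candidates for review; whether an idle VM can actually be removed is a decision for its owner.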

Attacks on Virtualization Features: Although there are multiple features of virtualization that can be targeted for exploitation, the more common targets include VM migration and virtual networking functions. VM migration, if done insecurely, can expose all aspects of a given VM to both passive sniffing and active manipulation attacks.

Compliance and Management Challenges: Compliance auditing and enforcement, as well as day-to-day system management, are challenging in virtualized systems. VM sprawl, and even dormant VMs, make it difficult to get accurate results from vulnerability assessments, patching and updates, and auditing.

Capacity Planning: Virtual infrastructure acts as the backbone of the data centers that power today’s businesses. As a business grows, its underlying infrastructure has to expand as well. Without proper capacity planning, the server management team will have a hard time scaling the infrastructure to meet business demands. Capacity planning also requires constant monitoring of the virtual infrastructure. The trend in resource consumption by the VMs has to be identified and projected over a period of time. This helps the server administrator understand the current trend and plan how much capacity has to be added to handle the future load.
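One simple way to project a consumption trend is a least-squares straight line through past samples. The sketch below assumes evenly spaced samples (for example, one per month) and linear growth, which is often too optimistic or too pessimistic in practice; the function names are invented for the example.

```python
import math


def linear_trend(values):
    """Fit y = slope * x + intercept to samples taken at x = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def periods_until_exhausted(values, capacity):
    """Periods after the last sample until the trend line reaches capacity.

    Returns None when consumption is flat or shrinking.
    """
    slope, intercept = linear_trend(values)
    if slope <= 0:
        return None
    x_full = (capacity - intercept) / slope
    last_sample = len(values) - 1
    return max(0, math.ceil(x_full - last_sample))


if __name__ == "__main__":
    # Hypothetical monthly memory consumption in GB on a 200 GB cluster.
    usage_gb = [100, 110, 120, 130]
    print(periods_until_exhausted(usage_gb, capacity=200))
```

With these made-up numbers, usage grows by 10 GB per month from 130 GB, so the line reaches 200 GB seven months after the last sample.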

Licensing Issues: Software vendors license virtualization in different ways. Unfortunately, there is no industry standard for applying metrics to virtual scenarios. Some software companies try to ignore the issue and remain in the physical realm, while others create conversion models based on peak resource use or running instances. For example, one organization was running seven virtual instances on a server with four physical cores. Depending on which software they were using, they required seven licenses from one vendor – but only four from another.
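The arithmetic in that example can be made explicit. The sketch below assumes the two vendors count licenses per running instance and per physical core respectively; the article does not name the metrics, so both labels and the function name are assumptions for illustration.

```python
def licenses_required(metric, running_instances, physical_cores):
    """Return the license count under an assumed licensing metric.

    metric -- "per_instance" (one license per running virtual instance)
              or "per_core" (one license per physical core on the host)
    """
    if metric == "per_instance":
        return running_instances
    if metric == "per_core":
        return physical_cores
    raise ValueError(f"unknown licensing metric: {metric}")


if __name__ == "__main__":
    # The scenario from the text: seven instances on a four-core server.
    for metric in ("per_instance", "per_core"):
        count = licenses_required(metric, running_instances=7, physical_cores=4)
        print(metric, count)
```

The same deployment yields seven licenses under one metric and four under the other, which is why the licensing terms of each product need to be checked before consolidating workloads.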
